If both nodes are up, but can’t reach each other, and continue to perform writes, you end up with a split-brain situation. In the case of a 2-node control plane, when one node can’t reach the other, it doesn’t know if the other node is dead (in which case it may be OK for this surviving node to keep doing updates to the database) or just not reachable. That is, going from a single-node control plane to a 2-node control plane makes availability worse, not better. So a two-node control plane requires not just one node to be available, but both the nodes (as the integer that is “more than half” of 2 is …2). Etcd uses a quorum system, requiring that more than half the replicas are available before committing any updates to the database. This information is stored as key-value pairs in the etcd database.

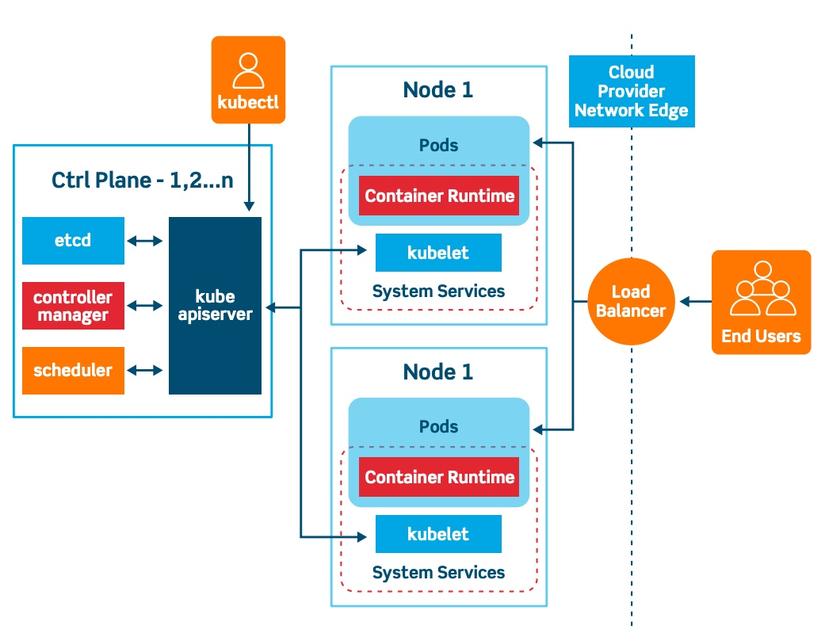

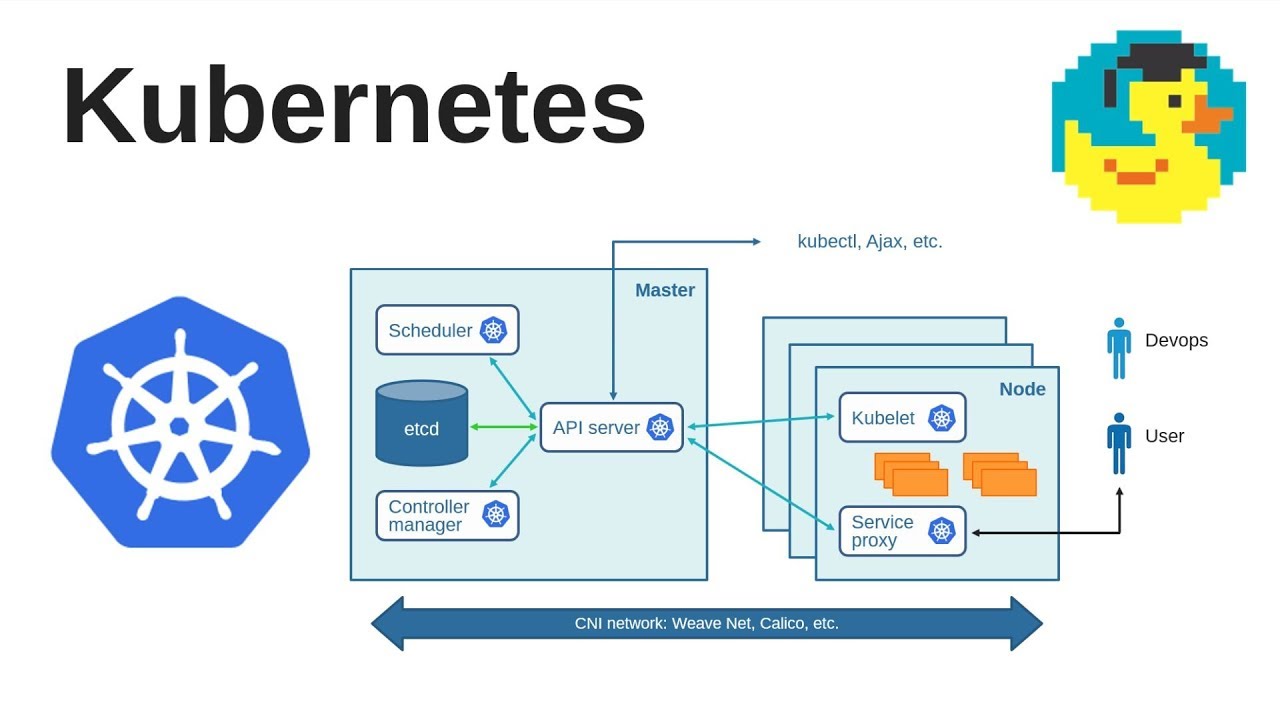

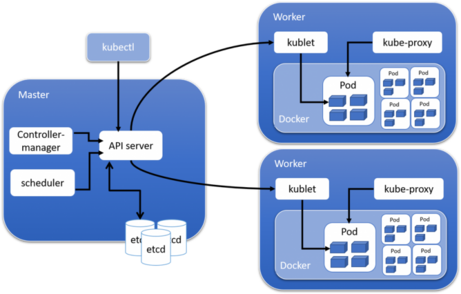

It’s not quite as simple as “more is better.” One of the functions of the control plane is to provide the datastore used for the configuration of Kubernetes itself. But how many nodes should you use? May the Odds Be Ever in Your Favor So how do you ensure the control plane is highly available? Kubernetes achieves high availability by replicating the control plane functions on multiple nodes. You also eliminate the chance that a vulnerability enables a workload to access the secrets of the control plane - which would give full access to the cluster.) (You can schedule pods on the control plane nodes, but it is not recommended for production clusters - you do not want workload requirements to take resources from the components that make Kubernetes highly available. Without a fully functioning control plane, a cluster cannot make any changes to its current state, meaning no new pods can be scheduled. As such, ensuring high availability for the control plane is critical in production environments. The control plane runs the components that allow the cluster to offer high availability, recover from worker node failures, respond to increased demand for a pod, etc. rw- 1 root root 3792 May 20 00:08 kube-apiserver.A Kubernetes cluster generally consists of two classes of nodes: workers, which run applications, and control plane nodes, which control the cluster - scheduling jobs on the workers, creating new replicas of pods when the load requires it, etc. 
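The quorum rule described above (“more than half the replicas must be available”) can be worked through with a few lines of arithmetic. The following is a minimal sketch; the function names `quorum_size` and `failures_tolerated` are made up for illustration and are not part of etcd or Kubernetes.

```python
def quorum_size(replicas: int) -> int:
    """Smallest integer that is strictly more than half of `replicas`."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return replicas // 2 + 1


def failures_tolerated(replicas: int) -> int:
    """How many replicas can be lost while a quorum is still available."""
    return replicas - quorum_size(replicas)


if __name__ == "__main__":
    # Print the quorum size and tolerated failures for small cluster sizes.
    print("replicas  quorum  failures tolerated")
    for n in range(1, 8):
        print(f"{n:>8}  {quorum_size(n):>6}  {failures_tolerated(n):>18}")
```

Under this rule, one replica and two replicas both tolerate zero failures, while three and four replicas both tolerate one. Adding a second node therefore adds a second point of failure without adding any tolerance.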
Did you download and install the Kubernetes Controller Binaries directly?

1) If so, check if the rvice systemd unit file exists:

    cat /etc/systemd/system/rvice

2) If not, you probably installed K8S with kubeadm.

With this setup the kube-apiserver is running as a pod on the master node:

    kubectl get pods -n kube-system
    kube-controller-manager-master-k8s 1/1 Running

So, because you can't restart pods in K8S, you'll have to delete it:

    kubectl delete pod/kube-apiserver-master-k8s -n kube-system

And a new pod will be created immediately.

(*) When you run kubeadm init you should see the creation of the manifests for the control plane static Pods:

    Using manifest folder "/etc/kubernetes/manifests"
    Creating static Pod manifest for "kube-apiserver"
    Creating static Pod manifest for "kube-controller-manager"
    Creating static Pod manifest for "kube-scheduler"
    Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

The manifest folder then looks like this:

    drwxr-xr-x 2 root root 4096 Oct 14 00:13 .
    rw- 1 root root 3863 Oct 14 00:13 kube-apiserver.yaml <- Here
    rw- 1 root root 3496 Sep 29 02:30 kube-controller-manager.yaml
    rw- 1 root root 1384 Sep 29 02:30 kube-scheduler.yaml

And the kube-apiserver spec:

    apiVersion: v1
    ...
    --client-ca-file=/etc/kubernetes/pki/ca.crt
    --enable-admission-plugins=NodeRestriction

Alternatively, move the kube-apiserver manifest file from the /etc/kubernetes/manifests folder to a temporary folder. The advantage of this method is that the kube-apiserver stays stopped for as long as the file is removed from the manifest folder.

    sudo mv /etc/kubernetes/manifests/kube-apiserver.yaml
    ll /etc/kubernetes/manifests/
    rw- 1 root root 3315 May 12 23:24 kube-controller-manager.yaml
    rw- 1 root root 1384 May 12 23:24 kube-scheduler.yaml

API Server is down now:

    k get pods -n kube-system
    The connection to the server 10.0.0.2:6443 was refused - did you specify the right host or

Moving the file back into the manifest folder starts it again:

    sudo mv /tmp/kube-apiserver.yaml
    ll /etc/kubernetes/manifests/
    rw- 1 root root 3792 May 20 00:08 kube-apiserver.yaml
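The approach of moving the kube-apiserver manifest out of the manifest folder and back again could be scripted roughly as follows. This is only a sketch under stated assumptions: the helper names `stop_static_pod` and `start_static_pod` are hypothetical, the folders are passed in as parameters rather than hard-coded, and on a real kubeadm node the script would need root privileges and would rely on the kubelet noticing the change to the manifest folder.

```python
import shutil
from pathlib import Path


def stop_static_pod(manifest_dir: Path, stash_dir: Path,
                    name: str = "kube-apiserver.yaml") -> Path:
    """Move a static Pod manifest out of the watched folder.

    Hypothetical helper: the Pod should stay stopped for as long as the
    file is absent from `manifest_dir`.
    """
    src = manifest_dir / name
    if not src.is_file():
        raise FileNotFoundError(f"no manifest at {src}")
    stash_dir.mkdir(parents=True, exist_ok=True)
    dst = stash_dir / name
    shutil.move(str(src), str(dst))
    return dst


def start_static_pod(manifest_dir: Path, stash_dir: Path,
                     name: str = "kube-apiserver.yaml") -> Path:
    """Move a previously stashed manifest back into the watched folder."""
    src = stash_dir / name
    if not src.is_file():
        raise FileNotFoundError(f"no stashed manifest at {src}")
    dst = manifest_dir / name
    shutil.move(str(src), str(dst))
    return dst
```

With the folders used in the text, a call would look like `stop_static_pod(Path("/etc/kubernetes/manifests"), Path("/tmp"))`, followed later by `start_static_pod` with the same arguments. Keeping the folders as parameters makes it possible to try the helpers on scratch directories first.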
